When two worlds collide: exploring the overlaps and interconnectedness between Platforms and AI

Luca Ruggeri

Andrea Valeri

Laks Srinivasan

This post is based on recurring conversations with Laks Srinivasan, co-founder and managing director of the ROAI Institute, and Andrea Valeri, a long-term contributor at Boundaryless who specializes in AI business applications.

Abstract

In this short blog post, we explore the intersections between Platform & Ecosystem design, our exploration and design frameworks, and the vast landscape of Artificial Intelligence services and solutions. Platforms can benefit from AI-powered tools and services; conversely, the world of AI services needs frameworks to identify, integrate and orchestrate the activities, components and processes that have to be designed and implemented for AI technologies to be adopted successfully.

We intend this post to open a series that explores each of the topics touched on here in more depth.

The fundamental message is that there’s a lot of ecosystem research still needed to better develop these frameworks for AI. So, we would be very happy and interested to involve everyone from the community who would like to contribute and be part of the project. Here, you can explore our partnership with ROAI on the topic.

In this preliminary and early stage, we have prepared a quick and simple survey, where you can get in touch with us, and explain your expertise or experiences in the adoption and use of AI solutions, especially in the overlap with Platform models and strategies.

Disclaimer:

- In this post we will use AI to broadly refer to automation, advanced analytics and Machine Learning (ML), but exceptions to this rule will be pointed out.

- This post aims to start a conversation around the theme of AI & Platforms. It is not intended to be exhaustive and it is not written for ML domain experts, although we intend to involve them in a further series of posts: get in touch!

Quick intro for AI experts: how we frame “Platforms”

Loyal readers of our blog know that we do not define platforms as technologies: they are not portals or websites. They are first and foremost business models designed to amplify the weak signals of hidden value in a given platformization space. This hidden or underutilized potential resides in entities and roles, and a platform strategy is the most efficient way to unleash it and to leverage the opportunities in the long tail.

Platform strategies, often referred to as network orchestration or aggregation strategies, can transform existing industrial value chains, which are not based on relational value, into strategies based on interconnectedness, where entities and roles on the supply side are connected to entities and roles on the demand side. Platform strategies are intentionally designed to reach exponential growth by establishing Network Effects. They do so by exploiting three value creation contexts (the marketplace, support services for producers and consumers of value, and extension platform patterns) that the platform’s aggregation services offer, develop and support, in resonance with the strongest expectations and capabilities of the ecosystem entities.

This short and over-simplified description of Platform Strategy (developed first through the exploration of opportunities and then through the design process) has a relevant consequence. The secret sauce is detecting the systemic outcomes that the key entities are looking for, and providing better channels of interaction and supporting services (learning services, in our definition) that help them become better, more efficient and more proficient at creating and exchanging value between the two sides, supply and demand. Most of these services and optimizations can benefit from the digital domain and, in our thesis here, from AI-based services.

As we said, Platforms are not primarily technologies, but emerging technologies can be really helpful, even if they introduce additional layers of complexity (or, for platform shapers, additional layers of opportunity). In the next sections we will expand on how AI can help optimize business processes, transaction channels, matching and recommendation algorithms, and so on.

Quick intro for Platform experts: how we frame “AI”

Tom Davenport, Chairman of the RoAI Institute, writes in his book “The AI Advantage” that AI technologies “employ such capabilities – previously possessed only by humans – as knowledge, insight, and perception to solve narrowly defined tasks.” We believe the greatest challenge with AI in the enterprise is not primarily technical: it is the path to value, which involves leadership, organization, talent, culture, governance, analytics-ready data, etc. While the challenges in adopting AI vary from company to company, there are some common barriers:

- AI Misunderstanding – A lack of understanding of what AI can and cannot (or should and should not) actually do, especially at senior levels

- Missing AI Strategy – No specific set and sequence of potential solutions aligned to customer & business needs

- “Business-as-usual” Mentality – Not keeping up with business model innovations and disruptions and business experimentation

- Lack of Data-Driven Culture – Data not treated as a strategic asset – data may be disparate and analytics a minor consideration

- Technical & Data-Product Talent Gap – Ill-defined roles, minimal advanced skills & tooling, and/or siloed technology teams

However, there are a number of companies that have been able to surmount these challenges to deploy AI at scale. You can learn about the strategies and approaches of those AI Leaders in this MIT SMR webinar where the RoAI Institute presented findings from a research study on “Critical Success Factors for Achieving ROI from AI Initiatives”.

The combined Boundaryless and RoAI Institute team has experience in over 85 platform projects and 500+ AI projects. Through research and our combined experience, we find that most companies on their AI journey settle for tactical applications of AI and therefore miss out on strategic returns such as AI-driven platform business models. On the other hand, we find that platform companies have not adopted basic AI capabilities to maximize ecosystem value generation. Finally, the AI ecosystem is dominated by a few major consulting and technology companies, which leaves a long tail of companies on both the demand and the supply side.

Therefore, Boundaryless and the RoAI Institute are partnering to democratize AI for the broader platform and AI ecosystems. There are synergies and cross-pollination opportunities between AI adoption strategies and platform strategies, and the two domains can be mutually beneficial. This introductory blog post is a starting point for exploring these mutual interactions. And, since we truly believe in ecosystem approaches, we need everyone’s support in exploring and validating our assumptions around this thesis that interconnects AI and Platform Design.

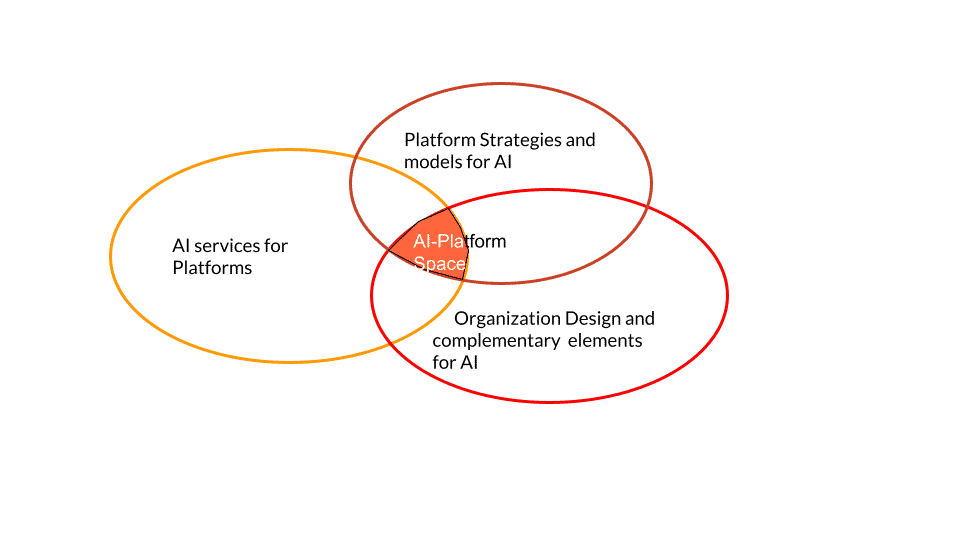

AI and Platforms cross-domain space

When it comes to the intersection of AI/ML and Platforms, we can envision three main areas of influence (we will expand on them in the next sections):

1. AI services, for Platforms

2. Platform strategies/models, for the AI ecosystem

3. Organization design elements for AI, including the intangible complementary framework parts needed for large-scale adoption of AI

Let’s expand the list above, with more details and insights.

AI-based services for Platform strategies

This first case is found in more and more platforms. The reason is simple: loyal readers of our blog know that Platform strategies help entities and roles in the ecosystem perform at their best by providing “learning services”, a powerful tool for dealing with complexity and for improving their capability to find resources, connect with the right counterpart, reduce paperwork, and automate common business processes.

The learning services offered by Platforms guide the key roles on the supply side and on the demand side through three stages: (1) onboarding, (2) getting better, and (3) catching new opportunities. Their purpose is to help producers of value (but also consumers of value) perform at their best, become more proficient, emerge from the crowd, and perceive the platform as an ally in their daily routines.

Typical services and features that address the onboarding “challenge” faced by entities and roles are:

- Orientation among the offering

- Learning which possibilities are reachable through the platform

- User profiling, to be capable of providing tailored solutions

- Categorizing needs, associating labels coming from the domain ontology so as to make the resources searchable and actionable

- Other special needs that depend on the vertical niche or domain and rely even more heavily on “deep tech” AI solutions. Examples include computer vision to scan and verify documents, and natural language processing to interact with users or to transcribe audio or visual content into machine-readable form.

Services and features that match the needs of getting better and also of catching new opportunities, are for instance:

- Automation of processes, such as document recognition and management (e.g. invoicing)

- Data intelligence, business intelligence, insights generation and visualization

- Dashboarding, actionable intelligence

- Matching, categorizing, demand-offering association

- High Throughput process managers, using AI to support a large number of leads (calendar and booking, ERP and CRM connectors, IFTTT smart systems, etc.)

- Marketing intelligence, validation testing, growth hacking, A/B and multi-armed bandit testing, etc.

The functions and services listed above (just examples, without any claim of completeness) match perfectly with some of the present capabilities of Machine Learning services. In fact, validated uses with proof of effectiveness of AI/ML applications include:

- Recommendation systems

- Sorting and matching algorithms

- Profiling, with or without a known ontology (e.g. via deep learning)

- Document processing

- Computer vision and automatic management of images, scans, documents

- NLP and speech recognition: interacting with users, reading text, transcribing speech, extracting word maps and meaning maps

- Data intelligence, OLAP multidimensional cubes reduction and interpretation, data visualization, predictions

- Decision support systems

- Pattern recognition, fraud detection, identity profile creation and verification

- Extraction of sorting and classification ontologies and heuristics, useful to understand parameters to categorize information, entities, etc.
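To make the matching and demand-offering association items above a little more concrete, here is a minimal sketch, assuming that both needs and offers have already been labelled with tags from a domain ontology. The `Listing` class, the tag names and the use of Jaccard overlap as a score are all illustrative assumptions, not a description of how any specific platform implements matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Listing:
    """A supply-side offer labelled with tags from a (hypothetical) domain ontology."""
    name: str
    tags: frozenset


def jaccard(a: frozenset, b: frozenset) -> float:
    """Share of labels two tag sets have in common (0.0 = none, 1.0 = identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_listings(need_tags: frozenset, listings: list, top_k: int = 3) -> list:
    """Return up to top_k (listing name, score) pairs, best match first."""
    scored = [(item.name, jaccard(need_tags, item.tags)) for item in listings]
    # Drop offers that share no label at all with the expressed need.
    scored = [pair for pair in scored if pair[1] > 0]
    # Sort by descending score, then by name so that ties are stable.
    return sorted(scored, key=lambda pair: (-pair[1], pair[0]))[:top_k]


# Invented example data: three offers and one demand-side need.
listings = [
    Listing("invoice-ocr", frozenset({"documents", "invoicing", "automation"})),
    Listing("lead-scheduler", frozenset({"calendar", "booking", "crm"})),
    Listing("doc-verifier", frozenset({"documents", "identity", "computer-vision"})),
]
need = frozenset({"documents", "invoicing"})
matches = rank_listings(need, listings)
```

The quality of such a match depends almost entirely on how well the tags reflect the domain, which is why the ontology and categorization work mentioned earlier comes before any algorithmic sophistication.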

There are now many examples of AI-enabled or AI-powered services that serve platforms. It’s no mystery that Facebook, Apple, Microsoft, Netflix and Google (the big FAMNG platforms) have extensive AI departments and are at the forefront of AI research and technology, since the Proprietary Tech and the Data Capture Optimization Capabilities Building Based Reinforcing Flywheels (CBRF) are strong strategies for increasing the defensibility of platforms. The same holds for technologies at the forefront of medical applications and healthcare-related services, such as the pioneering IBM Watson used as a decision support system (DSS) for doctors’ diagnoses.

Our point here, to reconnect with Platform & Ecosystem design, is that none of the services described above can be deployed in platform services in a generalized, domain-agnostic flavor, at least not at the current level of technological maturity. ML developers and data scientists need to tune tools and models on the domain primitives that constitute the scope of application. The more clearly the training data, the desired outcomes and the reinforcement learning patterns are defined within the application domain, the higher the quality of the datasets and the quality and reliability of the resulting trained models. AI experts need a clear understanding of the real and reachable objectives they are asked to meet, and of the processes, tools and resources (think data!) their ML models will be integrated with. In practice, they depend on the domain model of the application.

Complexity kicks in when you realise that very few of the elements needed to fully understand this domain depend purely on the organization that is evaluating AI services to optimize and improve its streams. Most (if not all) of the elements that really matter for designing an AI service are defined and described at the ecosystem level, in (and thanks to) the interactions among entities and roles (people, organizations, other systems).

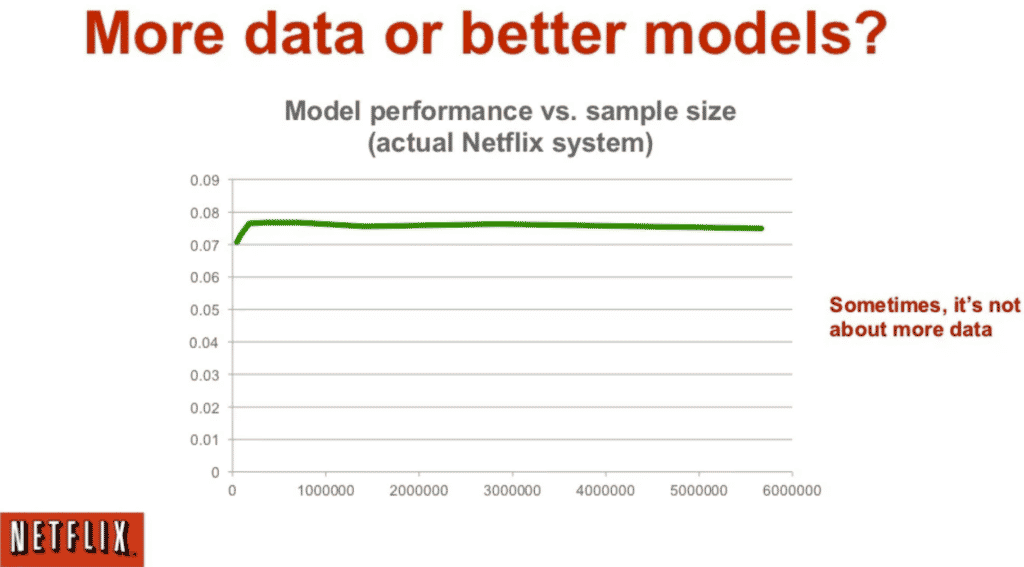

For instance, Netflix famously offered a Prize to those who could demonstrate that a better model improves performance much more than having a lot of de-contextualized data or metadata. Half-jokingly, the Prize can be read as an answer to the famous remark attributed to Norvig that Google does not have better algorithms, it only has a lot more data than others (of course, the truth is somewhere in the middle). You can also take a look at the study “Recommending Movies: a few ratings are more valuable than metadata”, which explains how contextual data relevant to the domain of application gives better performance. In that example, a small number of good movie ratings, coming either directly from the users who will receive the recommendation or from like-minded users clustered in the same cohort as the target users (an ecosystem interaction), has a dramatic positive impact on the quality and final appreciation of the recommendation.

Source: Xavier Amatriain on slideshare
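The idea that a few ratings from like-minded users can drive a recommendation can be sketched with a toy user-based collaborative filter. This is only an illustration under stated assumptions: the users, movies and ratings are invented, and cosine similarity with a similarity-weighted average is one simple textbook choice, not the method used by Netflix or by the study mentioned above.

```python
import math
from typing import Optional

# Hypothetical ratings (1-5) on a handful of movies; names and numbers are invented.
ratings = {
    "ana":   {"Alien": 5, "Heat": 4, "Amelie": 1},
    "bruno": {"Alien": 5, "Heat": 5, "Amelie": 1, "Blade Runner": 5},
    "carla": {"Alien": 1, "Heat": 2, "Amelie": 5, "Blade Runner": 2},
}


def cosine(u: dict, v: dict) -> float:
    """Cosine similarity computed over the movies both users have rated."""
    shared = set(u) & set(v)
    if not shared:
        return 0.0
    dot = sum(u[m] * v[m] for m in shared)
    norm_u = math.sqrt(sum(u[m] ** 2 for m in shared))
    norm_v = math.sqrt(sum(v[m] ** 2 for m in shared))
    return dot / (norm_u * norm_v)


def predict(user: str, movie: str) -> Optional[float]:
    """Similarity-weighted average of other users' ratings for an unseen movie."""
    num = den = 0.0
    for other, other_ratings in ratings.items():
        if other == user or movie not in other_ratings:
            continue
        sim = cosine(ratings[user], other_ratings)
        num += sim * other_ratings[movie]
        den += sim
    return num / den if den else None


# "ana" has not rated "Blade Runner"; her taste is much closer to bruno's than carla's,
# so bruno's high rating weighs more in the prediction.
predicted = predict("ana", "Blade Runner")
```

With only three ratings from the target user, the prediction already leans towards the opinion of the most similar neighbour, which is the intuition behind the cohort effect described above.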

Platforms (network orchestrator and aggregator models) can be really useful in providing this domain model framework: they contextualize the interactions among entities and roles, identify the exchanges of data and value units, and provide a defined space, a platform-ecosystem domain, where the AI/ML model can be developed, studied, trained, applied, and then continuously monitored for coherence, accuracy and performance.

Contextualizing the domain model and identifying the “business primitives” also enables the ecosystem to further evolve and develop AI/ML services, because it gives “solution developers” information rich enough to decide independently and autonomously what needs to be implemented, how, why and for whom. This is a necessary condition for fostering the long tail exponential growth leveraged by Platform strategies. If this condition is missing, all the responsibility for developing and evolving features falls on the Platform Owner, bringing more costs, poorer validation, and the risk of ecosystem misalignment.

We also wrote an earlier post, Platforms and the myth of Data, about the need for contextualized data in Platforms (intended as orchestrators of distributed and fragmented entities in the arena), or warm data, as Nora Bateson defines them. You might want to read it to understand which data are relevant for the different layers of interaction in an ecosystem and which, if supported by a platform strategy, can lead to interesting applications such as data-driven decision making, regulation by accounting, etc.

In this scenario, AI and Platforms can be mutually beneficial. AI needs context, while Platforms can benefit, for example, from the capability of ML models to derive heuristics, KPIs and metrics from measured data, making explicit a new layer of knowledge about the served arenas, as well as from the efficiency and throughput of AI-powered services.

Platform aggregation models and strategies, to serve AI ecosystems

Before diving deep into Platform verticals at the service of AI, we should consider that the business case “design and execute a Platform Strategy” is one of the most important drivers of AI adoption in organizations, and vice versa. Platform strategies are designed in and for complexity, and so are most AI services. Most (if not all) of them need strong personalization followed by continuous fine-tuning and updates. This is the perfect ground for Platform strategies, especially for leveraging the ecosystem entities to update AI services and expand their capabilities through integration and extension patterns.

So, Platform Strategies are a must for all organizations that want to position themselves in complex and articulated contexts, especially in the space of technology and digital services. As James Currier, founder and CEO of NfX Guild Venture Capital, puts it, Platform interfaces are so efficient that either you design and run your own platform strategy or you’ll become a player in someone else’s.

Coming back to our second scenario, we are exploring how platform models can support the “needs” of the AI ecosystem.

Oversimplifying the picture, we can say that AI/ML will need, or benefit from:

- Data lifecycle management: from the data generation and collection to the data annotation and preparation of training datasets, to the datasets used to verify the coherence of ML models

- Emergence of opportunities, challenges, technological developments, and transfers of technology, competencies and IP, to stimulate the development of innovative solutions

- Experts’ engagement: a lot of ML services rely strongly on humans in the loop. We need people to prepare the training datasets and to run the training or reinforcement learning of ML models, we need data scientists to think up, build and validate new models and algorithms, and we also need systems to verify the transparency or the responsibility profiles of AI services. And let’s not forget the many machine learning experts and developers needed to integrate and customize AI services within the business workflows of their client companies

- Training and education, case history management, culture transformation, etc.

- Legal frameworks, for instance to manage access to data, data ownership and the possibility of sharing data across trusted entities, or frameworks that affect the governance of AI solutions. If we think about the large themes of transparency, explainability or responsibility of AI, or about liability: how to manage mistakes and errors, and who will answer for potential damages caused by AI services, Platforms can provide the right ground for addressing these issues and let the ecosystem manage adaptive solutions.

These are just a few examples of the many overlaps between AI needs and Platform models. Platforms can, for instance, support the AI ecosystem in:

- Orchestrating labour marketplaces, to manage the human-in-the-loop and crowdsourcing of human resources in the critical phases of AI models. This includes attracting experts and data scientists, having enough workforce to verify and annotate large amounts of data to create training datasets and verification datasets that counterbalance the models’ biases, and managing the interactions between AI artifacts and the experts who contribute to the value chain;

- Domain model description, identification of the business primitives and business models, and adoption patterns for the solutions. This point was already described in the previous section, but here the focus is on evaluating all the ancillary elements that are necessary for the real adoption of AI solutions and that are often ignored or underestimated. This can make the difference between a satisfactory use of AI with optimal use of resources and projects that wind down quickly, wasting those resources. Guiding the process of understanding the objectives, evaluating the assets and capabilities already exploitable in the company, and then planning what needs to change and be developed before AI/ML solutions can be applied is a real value of platform approaches.

- Platform development patterns for AI can be applied both to developing new and better AI services and, more frequently, to helping existing AI products and services scale. As an example, think of deploying AI models or algorithms on hardware devices: a truly multidisciplinary and complex process. Or think of the problem of accessing the infrastructure needed to train large models (LaMDA, recently at the center of a sci-fi love affair, BERT, PaLM) or, more generally, the general-purpose foundational base models that need huge resources for their development (Microsoft and Nvidia planned to invest more than $1bn on new models). Boundaryless performed an interesting study within the EOSC (European Open Science Cloud) on distributed access to computational resources for scientists and EU member states; it is not specifically about AI, but its governance model is interesting.

- Last but not least, if you’re familiar with the three value propositions of Platform models, Platforms can help AI expand its possibilities through Extension platform patterns, which enhance the features, algorithms, models, data, frameworks, use cases, templates, blueprints, connectors and APIs of AI-based services and processes. By leveraging the ecosystem, these elements improve the available features as well as the future-proofness and evolutionary management of AI resources. Involving the ecosystem entities in the long-term development of AI solutions and features is key to enabling the long tail to capture the unknown-unknown needs and opportunities emerging in real networks (in other words, “only the end users and adopters can drive the mass personalization of end solutions”).

In a nutshell, platforms can represent both the set of use cases and the strategic framework to guide and foster the adoption, development, and management of AI solutions.

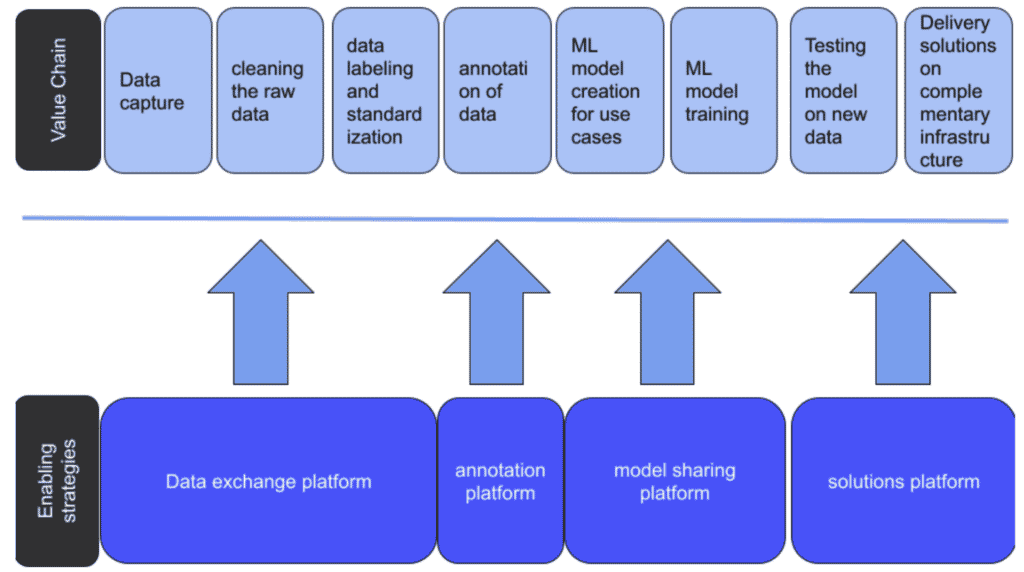

As an example, to describe these elements in practice, let’s consider the data lifecycle in a typical process around ML solutions:

Practically every stage of the value chain requires support from ecosystem entities and human-in-the-loop approaches. From data generation and capture, to the definition of the right ontologies and the categorization of data and metadata that make them machine readable, actionable and able to feed ML models (the annotation process), to the ideation and training of the models, and finally to the trained model being applied to raw data in real use, there are multiple possibilities for interaction with different players, roles and entities in the ecosystem.

Platform models can provide the grounds for smart contracting and data unions (data sharing mandates), which is useful for AI because it establishes a long tail, exponential growth pattern for preparing datasets for both the training and the application of AI services. There are great examples of these approaches in the Web2 or institutional domain as well as in the Web3 domain. You can refer, for instance, to Dimo or Streamr (a data aggregation solution that relies on tokens to distribute ownership and profits when data are monetized) on the decentralization side, or to this interesting initiative from the EU Commission on data sharing mandates, which aims to provide a legal framework that complies with data protection without blocking AI solutions.

Designing the overall strategy around AI solutions can make the difference between a thriving ecosystem and an overhyped, disappointing one. Some of the value chain elements should also be addressed at the ecosystem and institutional level, which leads us to the third point below.

Organisational, institutional, structural and governmental elements for AI

Even though we have mainly referred to AI as ML, the domain of AI is huge. It can be compared to large-scale technologies like telecommunications or electricity. In such a complex and vast ecosystem, there are multiple layers that need to be addressed.

In the previous sections we have only touched on the “tech/solutions” layer and on the “relational, long tail based value creation and exchange” layer.

The third layer is probably even more important when thinking of impacts since it’s composed of all those elements that can cover:

- Ethical frameworks

- Governance of AI (deployment models, maintenance and evolutionary models, education, training and upskilling, case history, etc.)

- Impacts and liabilities of AI (accountability, liability, transparency and explainability, responsible AI)

So, the key questions and design primitives in this layer are meant to address the following areas:

- Describe the application arenas of AI solutions: where do they make sense? Where do we want to avoid AI? This point connects with the general discussions about ethics, responsibility, liabilities, privacy and data protection, sensitive data, etc. Auditing AI solutions will become increasingly necessary and frequent in the near future, since more and more people will be exposed to AI services, or will at least become more aware of the implications of such services;

- Governance of AI: how can I procure innovative AI solutions, who can invest in them, and who can maintain them? What legal frameworks are applicable? How is responsibility distributed among the AI solution developer, the owner, and the users (especially for models that are evolutionary because they adopt a reinforcement learning pattern)?

- Long-term impacts of AI solutions: are they helping us solve problems, or are they amplifying some or all of our “biases”? Will the final measured impact be positive or negative? How can I measure the impacts, and how can I define the KPIs for a successful AI solution? Platforms can offer a scalable approach to risk management, liability management, and monitoring.

Some of the themes, issues and opportunities at this layer correspond to unresolved debates among multidisciplinary experts: not only data scientists but also attorneys, legal specialists, philosophers, sociologists, and experts on the impact of emerging technologies.

A couple of open questions help contextualize the above. Who is responsible for a bias in an AI model that puts some categories of people at risk, or that prevents doctors from being independent in their diagnoses? Is it only the original developer of the solution, or should responsibility be shared proportionally with those who trained the model or prepared the annotated dataset (perhaps involuntarily introducing biases)? In the case of evolutionary models, does it also extend to those who, by using the model, contributed to its (wrong) evolution? Or should responsibility follow the scheme applied, for example, to civil infrastructure: in case of a major issue, not only the initial designer but also the construction company, the maintenance crews, and everyone who is co-responsible for maintaining common goods and properties are considered involved?

And, given that for most ML and deep learning models it is very hard, if not impossible, to understand the end-to-end process the algorithms follow in making a decision, it can seem impossible to satisfy regulations like the EU GDPR, which would prevent ML/DL services from being applied at all: this surely protects privacy, but it also slows down some innovation. What if, instead of requesting full explainability, a platform framework could be used to assure that the solution is a certified responsible AI, so that it is always possible to track coherence, verify results, and declare the input and output artefacts used at every iteration?
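As a purely illustrative sketch of what “declaring the input and output artefacts used at every iteration” could look like in practice, the snippet below keeps a tamper-evident log in which every entry is chained to the previous one through a hash. The `AuditLog` class, its field names and the example records are hypothetical; this is one possible building block for traceability, not a certification scheme and not a way to meet any specific regulation.

```python
import hashlib
import json


def _digest(payload: dict) -> str:
    """Stable SHA-256 hash of a JSON-serialisable record."""
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


class AuditLog:
    """Append-only log where each entry stores the hash of the previous one."""

    def __init__(self):
        self.entries = []

    def record(self, model_version: str, inputs: dict, outputs: dict) -> dict:
        """Declare the artefacts used in one iteration and chain them to the log."""
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        body = {
            "iteration": len(self.entries),
            "model_version": model_version,
            "inputs": inputs,
            "outputs": outputs,
            "prev_hash": prev_hash,
        }
        entry = dict(body, hash=_digest(body))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


# Invented example: two decisions are logged, then one record is altered afterwards.
log = AuditLog()
log.record("v1", {"dataset": "claims-sample-a"}, {"decision": "approve"})
log.record("v1", {"dataset": "claims-sample-b"}, {"decision": "review"})
ok_before = log.verify()
log.entries[0]["outputs"]["decision"] = "reject"  # simulated tampering
ok_after = log.verify()
```

A log like this does not explain why a model decided something, but it lets an ecosystem of auditors check what went in, what came out, and whether the history has been rewritten.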

Please note that, despite the very good results and efforts of great initiatives like OpenAI (which is working hard to explain in detail how its CLIP computer vision model works under the explainable AI paradigm), here we are focusing on the impact of these services at scale in society, i.e. “AI at the service of citizens”, where these issues should be addressed not at a hard technical level but at an institutional level.

The point on ethics is really hot. Since developing bias-free AI solutions is beyond human capability, all we can do is try to reduce the negative impacts of such biases. We need to be as sure as we can that AI solutions in real ecosystems improve the overall quality and fairness of value exchanges rather than amplifying our weaknesses. We recently recorded a very interesting podcast with Otti Voght and Antoinette Weibel that touches on this consideration.

Platform models, seen as ecosystem orchestrators, guidance patterns and hubs of interaction, with their ramifications on governance, can be a real advantage in addressing most of these problems, especially by institutionalizing and historicizing what happens in the use of AI services. And with the incoming Web3 protocols and models enabling distributed and decentralized ownership and value among the engaged ecosystem players, Platforms will have more and better tools to intentionally address open issues. Here, an interesting blurb on some of the most promising overlaps: AI for Web3 security, DAPPs and Smart Contracts, better protocols and automatically calculated scores, truly data-driven historicized approaches…

And who knows, somewhere in time AI services will be considered as peer entities under certain conditions…

What’s next

Boundaryless and ROAI Institute are exploring the space where AI and Platforms overlap (see here the partnership page), since we believe that there are several areas where we can impact, and provide the ecosystem with many valuable elements, such as:

- Helping company leaders to develop AI fluency and intuition

- Helping organizations to address the complexity of adopting AI in their processes

- Providing rules, patterns, best practices on how to involve and interconnect experts, resources, other AI platforms

- Making explicit the transformative impacts of AI solutions on platforms and value chains (e.g. new Platform Plays, focused on the impact of AI)

- Describing the domain models to be considered in the various kinds of AI solutions

- Exploring the impacts of large-scale adoption of AI

- Defining a venture building process to support AI-focused projects in their development as, and thanks to, platform models

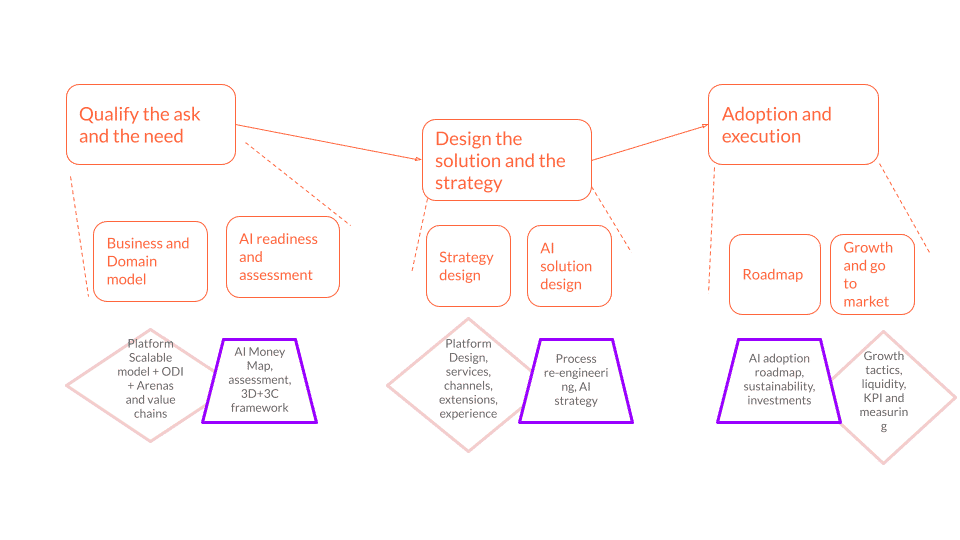

How can the specialist expertise of Boundaryless and ROAI work in synergy to guide interested parties in adopting AI solutions?

We are researching and developing a simple scheme like this:

The Boundaryless POE and PDT can be used to describe the domain model when qualifying the ask and the needs, relying also on the ODI – Arenas Scan process, while ROAI’s toolkit, which consists of the 3D+3C framework, the AI Readiness diagnostic and the AI MoneyMap tools, can help quantify and prioritize the opportunities.

The PDT and the growth product model frameworks focus on designing the experiences to be validated and on process optimization, while ROAI’s AI roadmap with the Governance model is key to planning the execution and go-to-market phases.

The fundamental message is that there’s a lot of ecosystem research still needed to better develop these frameworks for AI. So, we would be very happy and interested to involve everyone from the community who would like to contribute and be part of the project.

In this preliminary and early stage, we have prepared a quick and simple survey, at the following link, where you can get in touch with us, and explain your expertise or experiences in the adoption and use of AI solutions, especially in the overlap with Platform models and strategies.

Thanks for helping and connecting with us!

Before You Go!

As you may know, everything we do is released in Creative Commons for you to use. In case you’re getting value out of these reads and tools, we encourage you to share with your friends as this will help us to get more exposure, and hopefully, work more on developing these tools.

If you liked this post, you can also follow us on Twitter, where we regularly curate this kind of content.

Thanks for your support!
